Written by Dr. Swaminathan Krishnan, Senior Data Scientist, Illumination Works

Time series capture the variation of physical measurements over time. Because time is eternal and change is the only constant in nature, time series data are ubiquitous. What’s more, the urge for humans to know or be able to predict the future has existed from time immemorial, perhaps motivated by vanity, perhaps by curiosity, but often driven by an instinct to survive. This holds true to date, and, if anything, the need to forecast the future has only skyrocketed.

Forecasting with Rigor

The mushrooming of cheap sensors coupled with the concurrent evolution of cloud infrastructure is leading to a densely instrumented, highly interconnected world. With these sensors continuously emitting time-series data, this data type constitutes a massive fraction of the 149+ zettabytes of data created globally. While data collection and curation have reached a steady state, leveraging this data through forecasting of events and processes is just starting to pick up pace.

So, what exactly is a time series and what does time-series forecasting entail? This article provides an easy-to-understand primer that delves into these questions so you can see how you might leverage this technology for advancing your business and addressing mission needs.

Introduction to Time Series

What is a Time Series? Time series are among the most ubiquitous data on the planet. Fundamentally, a time series is a type of data used to understand the dynamics in real-world systems. It’s an ordered set of measurements of a quantity over time, usually measured at equally spaced time steps. Examples of a time series include the spot price of a company stock, the temperature at a given location, the number of books sold on Amazon daily, the monthly median sale price of single-family homes in the US, hourly pollen count in grains per cubic meter at a given location, and the yearly global average carbon dioxide concentration in the atmosphere measured in parts per million.

A time series can be conveniently visualized on a plot with the X axis representing the time scale and the Y axis tracking the physical quantity. At first glance, the series appears to have no inherent beauty and no definitive structure; it looks very random. But, in fact, a time series is like an onion: dull, boring, and, frankly, stinking, but when the layers are peeled back, the beauty in its layers is revealed. There’s a lot of intricate structure that holds deep secrets, great information regarding the underlying phenomenon or process or physical measure. What’s more, just as no two onions are the same, so too are no two series identical, rendering the scope of the field of time series analysis vast and fascinating, but challenging.

Why Time-Series Analysis. Time-series analysis equips decision makers to make informed decisions based on data-driven quantitative reasoning rather than business heuristics (personal experience). Data science (DS) can play a big role here: it provides reasoning that leads not only to a prediction but also to the quantitative uncertainty associated with that prediction, so a stakeholder may include (or discard) the forecast in decision making in accordance with their risk aversion. There are many applications for time-series analysis, such as:

- Logistics & Transportation – Forecasting of shipped packages

- Retail/Ecommerce – Inventory forecasting and promotion/pricing optimization

- Insurance – Claims prediction and premium pricing optimization

- Manufacturing & Operations – Predictive maintenance

- Energy and Utilities – Electric grid load forecasting

What’s Special About Time Series Data? A time series is an ordered set and needs to remain ordered to have meaning. The order of the measurements cannot be changed or jumbled when studying the quantity’s variation over time. For most physical measures, the future is dependent on the past, and, therefore, it is important to maintain this order.

If you’re serious about unlocking the full potential of your data, understanding time series is non-negotiable. From sensors, transactions, user activity, and environmental conditions to maintenance, performance, and movement data, important insights unfold over time, and most organizations have the ability to harness them. Time-series analysis isn’t just about looking back, it’s about leveraging the patterns hidden in temporal data to predict what comes next, with data-driven confidence.

The history-dependent evolution of the time series is the reason it is also referred to as a time history in the literature. One way to approach time-series forecasting is to think of the future value of the physical quantity as being equal to the past value plus a stochastic, or random, fluctuation. The challenge of forecasting then boils down to characterizing and estimating this random fluctuation.
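The "past value plus random fluctuation" view can be made concrete with a small simulation. The sketch below is illustrative only: it assumes the fluctuation is zero-mean Gaussian noise, and the function name `simulate_random_walk` and its parameters are hypothetical choices, not something prescribed by the article.

```python
import random

def simulate_random_walk(n_steps, start=0.0, sigma=1.0, seed=0):
    """Simulate y[t] = y[t-1] + e[t], with e[t] drawn from a zero-mean Gaussian."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    shocks = [rng.gauss(0.0, sigma) for _ in range(n_steps)]
    series = [start]
    for shock in shocks:
        # Future value = past value + random fluctuation
        series.append(series[-1] + shock)
    return series, shocks

series, shocks = simulate_random_walk(n_steps=5, start=100.0, sigma=2.0)
```

Real processes rarely have fluctuations this simple, which is why the rest of the forecasting workflow focuses on characterizing them.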

Time-Series Forecasting. Time-series forecasting captures the relationship of the future to the past by casting a functional structure to the random fluctuations to predict the future. Who doesn’t want to predict the future, right? This is where we start to peel back the layers of the onion, one layer at a time, by following four key steps:

- Graphically visualize the time series data to gain insight into the variation of the physical quantity over time

- Identify key features (the layers) of the data and interpret them

- Determine transformations and functional relations that can help forecast or predict the future given past values (the unpeeling) and develop a forecasting model

- Test the forecasting model via simulation and develop confidence metrics (uncertainty quantification) that quantify the likelihood of the forecast (to help the user ascertain how reliable the prediction is)

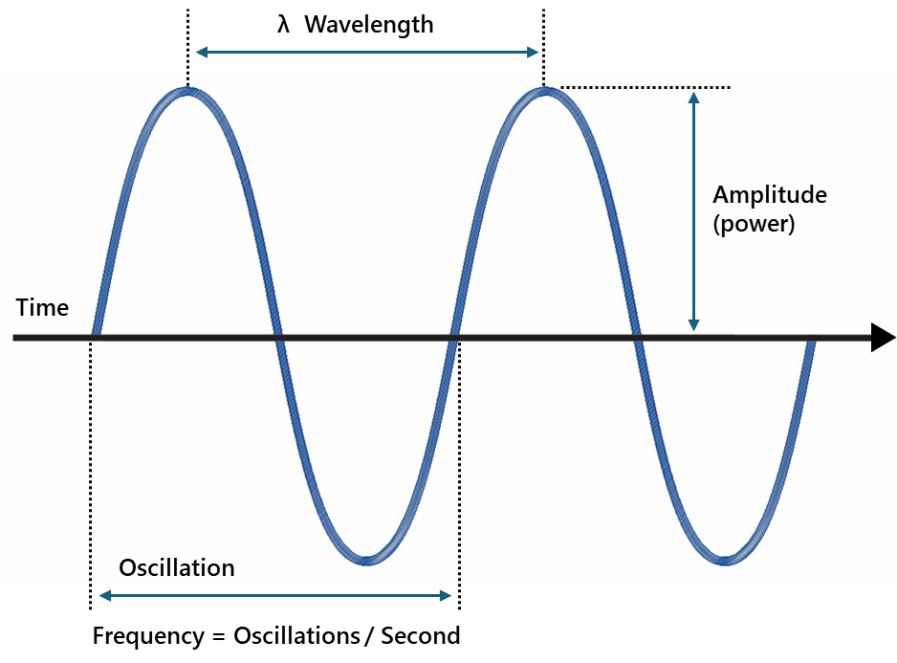

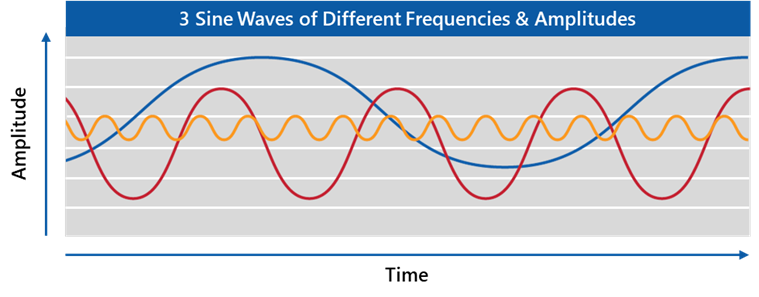

Time-Series Characterization. A time series is characterized by three fundamental building blocks: frequency, amplitude, and duration. Frequency is the number of cycles per unit time (for example, cycles per second). A cycle comprises one positive excursion or phase and one negative phase. Amplitude refers to the heights of crests and troughs in the waveform; it indicates how strong the signal is. Duration refers to the total length of the record in time units, or how long the waveform or time history lasts.

In the real world, frequency and amplitude seldom remain constant over time. This is what makes the time series appear noisy. The question is, can we make sense of this noise? That becomes our goal, to make sense of that noise.
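To see the three building blocks in isolation, it can help to generate an idealized signal where frequency and amplitude are held constant. This is a minimal sketch; the helper `sine_wave` and the sampling rate are assumptions made for illustration.

```python
import math

def sine_wave(frequency_hz, amplitude, duration_s, sample_rate_hz):
    """Sample amplitude * sin(2*pi*f*t) at evenly spaced time steps."""
    n_samples = int(round(duration_s * sample_rate_hz))
    return [
        amplitude * math.sin(2.0 * math.pi * frequency_hz * i / sample_rate_hz)
        for i in range(n_samples)
    ]

# 2 cycles per second, crest height 3, lasting 1 second, sampled 200 times per second
signal = sine_wave(frequency_hz=2.0, amplitude=3.0, duration_s=1.0, sample_rate_hz=200)
peak = max(abs(v) for v in signal)  # recovers the amplitude
```

A real-world series would look like a sum of many such waves whose frequency and amplitude drift over time, plus noise.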

Features of a Time Series

Time Series Decomposed. Time series observations of real-world quantities often include tractable features. It is critical to identify these features so that DS/machine learning (ML) solutions may be suitably tailored. One natural way to dissect a time series is to decompose it into three components: the trend (long-term direction), the seasonality (systematic, calendar-related movements), and the irregular short-term fluctuations (i.e., unsystematic, often stochastic or random; volatility). Trends and seasonal variations may or may not be present in significant measure, but most real-world measurements contain a significant dose of the irregular component, which is perhaps the most difficult aspect to model and presents the greatest challenge for DS/ML solutions.

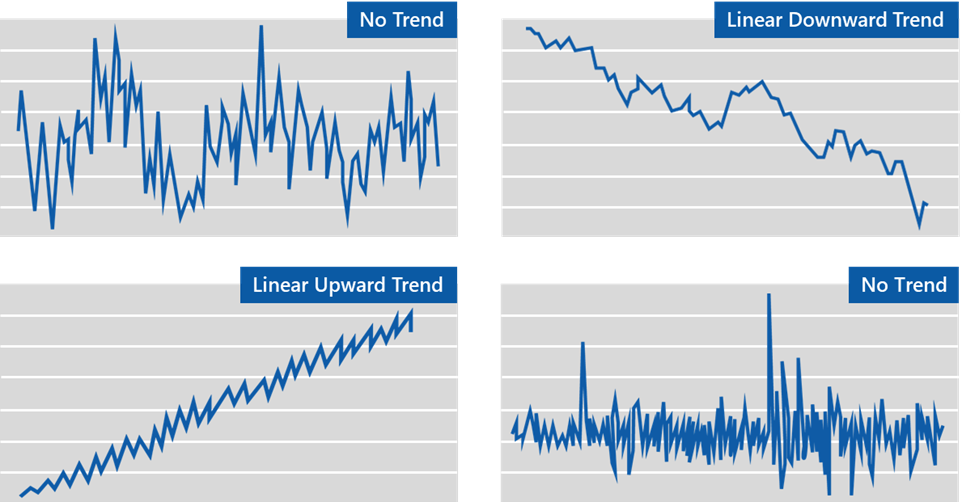

Deterministic Trends. Looking for a deterministic trend is like peeling back the first layer of the onion. A deterministic trend can be visually assessed and is expressed as a predictable, systematic pattern in the data that evolves over time and does not change randomly. Deterministic trends are not influenced by random shocks along the way. A trend may be the result of influences that grow or shrink monotonically over a given period, such as population growth. Trends in a time series may be removed by finding a polynomial, logarithmic, or exponential function that best fits the time series (using regression) and subtracting this function (sampled at the same time steps as the time series) from the time series. This step is known as detrending the time series.
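As a rough illustration of detrending, the sketch below fits a straight line (the simplest polynomial) by ordinary least squares and subtracts it. The function names `fit_line` and `detrend` and the synthetic series are assumptions for the example; a logarithmic or exponential fit would follow the same fit-then-subtract pattern.

```python
def fit_line(values):
    """Ordinary least-squares fit of values[t] ~ intercept + slope * t."""
    n = len(values)
    t_mean = (n - 1) / 2.0
    y_mean = sum(values) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(values))
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return intercept, slope

def detrend(values):
    """Subtract the fitted line, sampled at the same time steps as the series."""
    intercept, slope = fit_line(values)
    return [y - (intercept + slope * t) for t, y in enumerate(values)]

# Synthetic series: upward trend of 0.5 per step plus an alternating wiggle
series = [10.0 + 0.5 * t + (1.0 if t % 2 == 0 else -1.0) for t in range(20)]
intercept, slope = fit_line(series)
residual = detrend(series)  # what is left once the trend is removed
```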

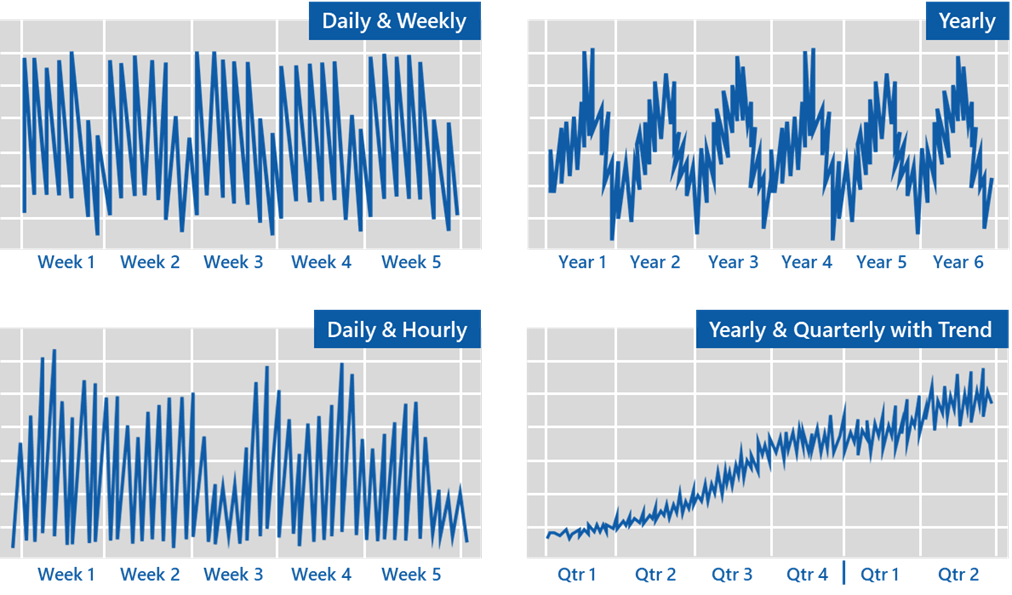

Deterministic Seasonality. Looking for seasonal patterns is like peeling back the next layer of the onion. This too can usually be visually identified. When a time-series pattern repeats itself at regular time intervals, the underlying process is said to be periodic, and a seasonal effect is said to be present, such as a sharp increase in retail sales during the Christmas holiday season or an increase in the demand for flights during the summer. For a predictive algorithm to be uniformly effective throughout the year, systematic seasonal effects must be removed from the time series. Otherwise, the ML model would be highly influenced/controlled by the large-amplitude segments of the time series (during peak season) and would have little predictive power over the lean-season segments of the signal. Seasonal effects may obscure the underlying movement in the series, especially nonseasonal characteristics that may be of interest. Seasonality in a time series can be identified by regularly spaced peaks and troughs that have a consistent direction and approximately the same magnitude each year, relative to the trend.
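One simple way to remove a seasonal effect is to average the series at each position in the cycle and subtract that average profile. The sketch below assumes an additive seasonal effect, a known period, and a series that has already been detrended; `seasonal_profile` and `deseasonalize` are hypothetical helper names.

```python
def seasonal_profile(values, period):
    """Average value at each position in the cycle, centered to sum to zero."""
    buckets = [[] for _ in range(period)]
    for t, y in enumerate(values):
        buckets[t % period].append(y)
    means = [sum(b) / len(b) for b in buckets]
    overall = sum(means) / period
    return [m - overall for m in means]

def deseasonalize(values, period):
    """Subtract the seasonal profile from every observation."""
    profile = seasonal_profile(values, period)
    return [y - profile[t % period] for t, y in enumerate(values)]

# Synthetic quarterly-style data: a level of 20 plus a repeating 4-step pattern
pattern = [5.0, -1.0, -4.0, 0.0]
series = [20.0 + pattern[t % 4] for t in range(12)]
adjusted = deseasonalize(series, period=4)  # the flat underlying level
```

With the seasonal swing removed, peak-season and lean-season observations sit on the same scale for whatever model comes next.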

Modeling Periodicity Using the Fourier Series. Here is where we peel back the next layer of the onion, and DS begins adding value with a mathematical transformation that breaks the time series into individual components. These components may take the form of trigonometric functions (sines and cosines) of different frequencies, and the weighted sum of these functions is known as the Fourier series decomposition. One can then view the time series in the frequency domain instead of the time domain in the form of a frequency (or a Fourier) spectrum, a plot of the intensity of the signal as a function of the frequencies of the individual components.

Peaks will be observed in the spectrum centered at the frequencies corresponding to the frequencies of the cosine and sine waveforms that combine to produce the original time series. A spectrum that consists of a single cluster of closely spaced peaks concentrated within a narrow range of frequencies is termed a narrow-band signal. If the frequencies of the peaks are spread apart over a broad range, the signal is termed a broad-band signal. The strength (heights of the peaks) of the signal in the frequency domain is referred to as the power of the signal (as opposed to amplitude in the time domain). One fast technique to compute the Fourier series decomposition and the Fourier spectrum is the Fast Fourier transform (FFT).
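The idea of spectral peaks can be sketched with a naive discrete Fourier transform written with the standard library only. This is for illustration: it runs in O(n^2), whereas an FFT routine (for example NumPy's `numpy.fft.rfft`) computes the same coefficients far faster. The function name `power_spectrum`, the test signal, and the power normalization are assumptions of this example.

```python
import cmath
import math

def power_spectrum(values, sample_rate_hz):
    """Naive DFT; returns (frequency_hz, power) pairs from 0 up to the Nyquist frequency."""
    n = len(values)
    spectrum = []
    for k in range(n // 2 + 1):
        coeff = sum(
            v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(values)
        )
        # Dividing by n is one common normalization convention, chosen here arbitrarily
        spectrum.append((k * sample_rate_hz / n, abs(coeff) ** 2 / n))
    return spectrum

# One second of data: a strong 4 Hz component plus a weaker 10 Hz component
sample_rate_hz = 64
signal = [
    2.0 * math.sin(2 * math.pi * 4 * i / sample_rate_hz)
    + 0.5 * math.sin(2 * math.pi * 10 * i / sample_rate_hz)
    for i in range(sample_rate_hz)
]
spectrum = power_spectrum(signal, sample_rate_hz)
dominant_frequency, _ = max(spectrum, key=lambda pair: pair[1])
```

The two sinusoids that are hard to tell apart in the time domain show up as two separate peaks in the spectrum, with the taller peak at the frequency of the stronger component.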

Time-series analysis capabilities have shifted with the advent of modern cloud-based data tools and the prevalence of high-volume data. Traditionally, systems were batch-only and forecasts occurred infrequently. Now, with the modern cloud data stack, data is continuously ingested, processed, and used for prediction. The decisions happen in real time and future awareness occurs simultaneously, which allows for predictions to update continuously as new data arrives. Essentially, predictions become just another streaming feature, enabling continuous decision-making systems where real-time state and future predictions coexist in the same pipeline.

Frequency domain analysis is useful when the underlying physical process is characterized by the frequencies in the signal. For instance, an electrocardiogram (ECG), which is a graphical representation of the electrical activity of the heart, is an electrical signal that varies over time. The frequency (and timing) information in an ECG signal can help detect cardiac arrhythmia (ARR) and congestive heart failure (CHF), with the signals having distinct features in the frequency domain as compared to that of a normal sinus rhythm (NSR). Applying a Fourier (or a wavelet) transform to the data and using the power and the frequency of the peaks from the spectra as features, one can diagnose ARR and CHF with a certain degree of confidence.

Additionally, frequency domain representation of a time series can help us isolate/identify the frequency band of noise present in the time series. Effective filters can then be designed and applied to the time series to separate signal from noise. Such preprocessing can significantly improve the effectiveness of learning algorithms.

Time-Series Modeling Approaches

Separating the Deterministic from the Stochastic. When deterministic trends and seasonality are removed from the time series, what remains are the random or stochastic fluctuations. Deterministic trends and seasonality typically constitute the human insights that businesses often rely on when deciding on future actions. These can be discerned visually or heuristically. Where DS can add true value is in characterizing the stochastic fluctuations, extracting intricate structure above and beyond what can be discerned visually.

Modeling the Random Fluctuations. Because the future is dependent on the past, many real-world processes are auto-regressive, meaning the future can be linearly regressed from the past; that is, the values of the physical quantity at future time steps may be determined by a linear regression on one or more past values. This simple classical model is called the auto-regressive (AR) model. Adding a stochastic residual to this regression on the past models the random fluctuation. AR models are the building blocks in many complex models representing real-world phenomena, a main value add that DS brings by further lowering uncertainty and mitigating risks in forecasts.
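A first-order AR model, which regresses each value on the single previous value, is the smallest instance of this idea. The sketch below simulates such a process and recovers its coefficient by least squares. It assumes a zero-mean series with Gaussian residuals; `simulate_ar1` and `fit_ar1` are hypothetical names.

```python
import random

def simulate_ar1(n, phi, sigma=1.0, seed=0):
    """Generate y[t] = phi * y[t-1] + e[t] with Gaussian residuals e[t]."""
    rng = random.Random(seed)
    series = [0.0]
    for _ in range(n - 1):
        series.append(phi * series[-1] + rng.gauss(0.0, sigma))
    return series

def fit_ar1(series):
    """Least-squares estimate of phi, regressing each value on its predecessor."""
    past, future = series[:-1], series[1:]
    return sum(p * f for p, f in zip(past, future)) / sum(p * p for p in past)

series = simulate_ar1(n=2000, phi=0.7)
phi_hat = fit_ar1(series)                # should land close to 0.7
one_step_forecast = phi_hat * series[-1]  # point forecast for the next step
```

Higher-order AR models regress on several past values instead of one, but the fitting principle is the same.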

Classical models, such as the AR model, work well when the randomness is stationary but not when it is non-stationary. A time series, or more accurately, the underlying process, is said to be stationary if its statistical properties (i.e., mean, variance, and higher order moments) do not change over time. In simple terms, the nature of the underlying process is unchanging over time; for instance, there are no sudden jumps in the time series.

Differencing the time series (or the logarithm of the series) once or more, that is, computing the differences between consecutive observations, may make the time series stationary, allowing classical methods to be used in forecasting. In addition to the AR models, classical methods include Exponential Smoothing (without and with trends and seasonality), Moving Average (MA), Auto-Regressive Moving Average (ARMA), Auto-Regressive Integrated Moving Average (ARIMA, typically fitted using the Box-Jenkins methodology), Generalized Auto-Regressive Conditional Heteroskedasticity (GARCH), and Generalized Additive Models (GAMs) such as Facebook’s Prophet.
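Differencing is simple enough to show in a few lines. In this sketch (the function name `difference` is an assumption), one round of differencing flattens a linear trend, and two rounds flatten a quadratic one.

```python
def difference(values, order=1):
    """Apply consecutive differencing `order` times; each pass shortens the series by one."""
    for _ in range(order):
        values = [b - a for a, b in zip(values, values[1:])]
    return values

linear = [3.0 + 2.0 * t for t in range(6)]   # steadily rising mean
quadratic = [float(t * t) for t in range(6)]  # accelerating mean

first_diff = difference(linear)               # constant: the slope
second_diff = difference(quadratic, order=2)  # constant after two passes
```

Here `first_diff` is `[2.0, 2.0, 2.0, 2.0, 2.0]` and `second_diff` is `[2.0, 2.0, 2.0, 2.0]`: the changing mean is gone, leaving a series that a classical model can handle.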

Modern (decision tree-based ensemble models) & Cutting Edge (deep learning-based models). Traditional tree-based ML approaches work well on datasets where the datapoints (rows) are independent of each other. However, because most time series represent processes where the current values are dependent on past values, this history dependence must be captured. This can be achieved by taking N past values of the time series as N features to be used in the prediction of the current value of the time series. These lag features can then be combined with other features in formulating the forecasting model, features such as the calendar day of the week and other exogenous features such as the weather that may affect the process underlying the generation of the time series. Tree-based ensembles, in theory, should be able to capture seasonality features. There is no need to eliminate/model seasonality separately, although modeling seasonality can still help increase the sensitivity of the tree-based model.
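Turning a series into lag features amounts to sliding a window of length N along it. The sketch below is one plausible layout (oldest lag first); the helper name `make_lag_features` is hypothetical, and calendar or weather features would be added as extra columns alongside the lags.

```python
def make_lag_features(values, n_lags):
    """Build rows of the n_lags values preceding each target, oldest first."""
    rows, targets = [], []
    for t in range(n_lags, len(values)):
        rows.append(values[t - n_lags:t])  # the N past values become N features
        targets.append(values[t])          # the current value is the label
    return rows, targets

series = [10, 11, 13, 16, 20, 25]
X, y = make_lag_features(series, n_lags=3)
```

For this series, `X` is `[[10, 11, 13], [11, 13, 16], [13, 16, 20]]` and `y` is `[16, 20, 25]`, a tabular dataset that a tree-based ensemble can be trained on.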

Because time series are history dependent, order matters and must be preserved. We do this using the notion of time windows. The entire time series can be broken into train/test data chunks using time windows that are either overlapping or non-overlapping. Traditional cross-validation techniques can then be used on these windowed datasets (with order preserved). For multi-step forecasting (forecasting the values of the labels over multiple time steps in the future), we can apply a single-step forecasting model with a one-step lag feature recursively: the label predicted at one time step is appended to the features used to predict the label for the next step. Alternatively, one could use multiple tree-based models, one for each of the time steps to be predicted in the future.
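Both ideas, order-preserving windows and recursive multi-step forecasting, can be sketched briefly. The expanding-window scheme below is only one of several windowing choices, and `naive_drift` is a placeholder standing in for a trained single-step model. All names here are assumptions for illustration.

```python
def expanding_window_splits(n_samples, initial_train, horizon):
    """Yield (train_indices, test_indices); each test block follows its training block in time."""
    end = initial_train
    while end + horizon <= n_samples:
        yield list(range(end)), list(range(end, end + horizon))
        end += horizon

def recursive_forecast(history, one_step_model, n_lags, steps):
    """Forecast several steps ahead by feeding each prediction back in as the newest lag."""
    window = list(history[-n_lags:])
    forecasts = []
    for _ in range(steps):
        prediction = one_step_model(window)
        forecasts.append(prediction)
        window = window[1:] + [prediction]  # drop the oldest lag, append the prediction
    return forecasts

def naive_drift(window):
    """Placeholder single-step model (an assumption): repeat the most recent change."""
    return window[-1] + (window[-1] - window[-2])

splits = list(expanding_window_splits(n_samples=10, initial_train=4, horizon=2))
forecasts = recursive_forecast([1.0, 2.0, 3.0, 4.0], naive_drift, n_lags=2, steps=3)
```

Because every training window ends before its test window begins, the model is never evaluated on data older than what it was trained on. One trade-off of the recursive strategy is that prediction errors are fed forward and can compound over the horizon.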

The single-step and multi-step forecasting strategies described for decision tree-based ensemble models also carry over to time-series forecasting with cutting-edge deep-learning networks, such as convolutional neural networks (CNN), recurrent neural networks (RNN), and long short-term memory (LSTM) networks. As with the traditional and modern methods, removing trends, normalizing the data, and applying the feature engineering strategies described previously typically lead to better results. Deep learning models can be unstable, with small changes leading to significantly different or erratic predictions; causes include random weight initializations, non-deterministic GPU operations, data non-stationarity, and overfitting to the noise in the training data rather than fitting to the signal. It is therefore good practice to keep a baseline model based on other standard techniques (such as ARIMA) to compare against, and to discard wild forecasts if necessary. The network should be designed systematically, starting with a simple structure, progressively increasing model complexity, and stopping when the model starts to overfit to the training data.

Time-Series Applications Beyond Forecasting

Data Mining. Beyond forecasting, there exists a class of time-series applications that fall under the umbrella of data mining. These include:

- Indexing – Given a query time series and some similarity/dissimilarity measure, the goal is to find the most similar time series in a database

- Clustering – Finding natural groupings of the time series in a database under some similarity/dissimilarity measure

- Classification – Classifying a time series as belonging to one of two or more classes

- Summarization – Stripping a time series for its essential features and approximating it in a lean fashion

- Anomaly Detection – Given a time series and some model of normal behavior, the goal is to find all sections that contain anomalies or surprising/interesting/unexpected/novel behavior

Each of these applications hinges on our ability to characterize the time series (i.e., extract features that are representative of the time series itself or the process generating the time series) and the lessons outlined for forecasting apply equally.
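As one small example from this list, anomaly detection needs a model of normal behavior. The sketch below uses about the simplest such model: the mean and standard deviation of a segment assumed to be fault-free, with readings flagged when they fall more than a chosen number of standard deviations away. The function name, the threshold of 3, and the sample readings are all illustrative assumptions.

```python
import statistics

def flag_anomalies(values, baseline, threshold=3.0):
    """Return indices of values more than `threshold` standard deviations from the baseline mean."""
    mean = statistics.mean(baseline)
    std = statistics.stdev(baseline)  # sample standard deviation of the normal segment
    return [i for i, v in enumerate(values) if abs(v - mean) > threshold * std]

# Baseline: readings assumed to represent normal operation (hypothetical numbers)
baseline = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.3, 9.7]
readings = [10.1, 9.9, 14.0, 10.2, 6.5]
anomalies = flag_anomalies(readings, baseline)  # indices of the surprising readings
```

In practice the model of normal behavior would usually be built from richer features, such as the spectral peaks or residuals discussed earlier, rather than raw values alone.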

Final Thoughts

Fundamental Realities of Time Series Forecasting:

- Time series are inherently random and seldom deterministic

- The future depends on history, but the future usually does not repeat history

- Every forecasting exercise must be accompanied by uncertainty quantification

- The farther out into the future you wish to predict, the farther back you must investigate history

- As you increase the forecasting horizon, the reliability of your prediction drops

- Time series seldom evolve in isolation – there are often multiple time series that are interdependent (multivariate time-series analysis)
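The link between forecasting horizon and reliability can be illustrated with the random-walk view of a series. Assuming independent Gaussian shocks with a known standard deviation (a strong simplification), the uncertainty of an h-step-ahead forecast grows with the square root of h. The function name and numbers below are hypothetical.

```python
import math

def random_walk_interval(last_value, sigma, horizon, z=1.96):
    """Approximate 95% interval for a random walk `horizon` steps ahead.

    The point forecast is the last observed value; the forecast standard
    deviation is sigma * sqrt(horizon) under independent Gaussian shocks.
    """
    half_width = z * sigma * math.sqrt(horizon)
    return last_value - half_width, last_value + half_width

intervals = {h: random_walk_interval(100.0, sigma=2.0, horizon=h) for h in (1, 4, 9)}
widths = {h: upper - lower for h, (lower, upper) in intervals.items()}
```

Going from 1 step ahead to 4 steps ahead doubles the interval width, and 9 steps ahead triples it, which is the quantitative face of reliability dropping as the horizon grows.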

ILW Experience

From Sensor Data to Action – Predicting Failures Before They Happen. ILW has brought its expertise in time-series forecasting to a wide range of offerings within both the Defense and Commercial Divisions.

Case Study 1. In the Defense arena, ILW has applied time-series techniques on:

- Machine vibration data to predict failures and raise preventive maintenance alerts as and when needs arise

- Aircraft fleet utilization data to predict maintenance metrics such as repair hours and actions

- Inherent and induced failures and corrosions in aircraft components

Case Study 2. ILW’s Data Analytics as a Service (DAaaS) team is currently developing a variety of solutions for the US Air Force Sustainment Center (AFSC), applying some of these time-series techniques to sensor measurements (accelerations, velocities, displacements, temperature, etc.). These include anomaly detection tools to identify faults and preventative maintenance tools that raise alerts when a maintenance action is deemed necessary for CNC machines (lathes and mills), dust collectors, vacuum pumps, etc., used by the AFSC to repair and overhaul aircraft parts and assemblies. These diagnostic and predictive tools have the potential to significantly lower machine downtime/repair time and avoid costly misdiagnosis and repeat trips to maintenance shops.

Case Study 3. In the commercial space, ILW has been able to assist clients with product pricing based on historical customer transaction patterns and choosing optimal additive injections in the chemical processing of sludge.

“In an industrial environmental system, ILW demonstrated the practical application of time series analysis in improving operational efficiency. By leveraging insights from real-time data, our client was able to adjust on-demand treatment patterns to reduce unnecessary waste, leading to significant productivity gains.”

“Time-series analysis, when combined with human insight and decision making, enabled our commercial client to drive informed decisions about chemical usage, minimizing waste and maximizing productivity through real-time predictions of chemical set points.”

– Surendra Karnatapu, Senior Data Scientist, Illumination Works

About the Author

Swaminathan Krishnan, PhD, is a Civil (Structural) Engineer by training and has bachelor’s, master’s, and doctoral degrees in that field. After finishing his training, Dr. Krishnan remained in academia for several years, where he held assistant and associate professor positions at Caltech and Manhattan University, teaching and conducting research in dynamics, vibrations, and computational mechanics. He was awarded the prestigious Fulbright academic and professional excellence award in 2014. This took him to the Indian Institute of Technology for a year as a visiting professor, following which he spent a few years at a multinational engineering firm as a Consulting Engineer. Dr. Krishnan brings rich skills in time-series analysis, anomaly detection, numerical simulation, digital twin modeling, and optimization. Since joining ILW, he has leveraged his DS expertise, particularly in time-series forecasting, alongside his strong programming skills to enhance the capabilities delivered to our defense and commercial customers. Dr. Krishnan also contributes positively to the DS Practice and ILW as a whole, and his excellent contributions were recognized with the Brightest Light Award from ILW in 2023.


Special thanks to the technical reviewers of this article:

- John Tribble, Director of Data Science

- Janette Steets, Associate Vice President, Defense Division

- Erica Swanson, Senior Data Scientist

- Surendra Karnatapu, Senior Data Scientist

About Illumination Works

Illumination Works is a trusted technology partner in user-centric digital transformation, delivering impactful business results to clients through a wide range of services including big data information frameworks, data science, data visualization, and application/cloud development, all while focusing the approach on the end-user perspective. Established in 2006, the Illumination Works headquarters is in Beavercreek, Ohio. In 2020, Illumination Works adopted a hybrid work model and currently has employees in 20+ states and is actively recruiting.

Check out our Newsroom for more company highlights!