With data volume growing rapidly, creating structure and organization to extract maximum value and insights from large data sets is crucial. Solid data taxonomies are a must for meaningful data usage, but creating taxonomies can often be a time-consuming and difficult task.

This article proposes a novel perspective on automating taxonomy construction by applying data science algorithms to database queries.

A Journey into Data Organization

Data Taxonomies

Data is increasing exponentially in size and in kind. At present, even small organizations collect and attempt to aggregate large amounts of data from disparate sources, ranging from machine and sensor data (IoT) to online data and even manually annotated crowdsourced data. Governments try to legislate it, organizations create positions to make the most of it, and everyone values it for knowledge and insights.

As data grows within an organization, so does the number of users, and likewise the number of use cases. In the process, variables within the data become different things to different stakeholders. For the data to be easily located, analyzed, and communicated across different departments and teams, establishing clear definitions, classifications, and hierarchies of those variables becomes very important to the success of the organization. Taxonomies provide this structure.

Data Taxonomies & Why They Are Useful

Data taxonomies are classification systems that can help organizations improve data governance, data quality, and overall data management processes. A taxonomy comprises a hierarchical classification or categorization system leveraged to organize and structure an organization’s data through categories, subcategories, and metadata associated with each data type.

In other words, a taxonomy is defined using a semantic data model, where rules, values, and relationships between attributes are part of the overall hierarchical structure. When constructed correctly, a data taxonomy provides a systematic and structured framework to classify and label different types of data based on their characteristics, attributes, or usage. A data taxonomy might be designed based on business functions, data sources, data formats, or other relevant criteria that suit the needs of the individuals with various roles within an organization. Taxonomies are based on the function of the data or use cases, and not the data values themselves.

Tackling Taxonomy Challenges with AI

A Data Maze of Confusion

Manually generating a good taxonomy is almost impossible because different users of taxonomized data will view the data differently. Consequently, building a taxonomy can be a confusing and contentious process, even, or especially, when relevant stakeholders are involved.

For instance, machine parts are notoriously difficult to taxonomize because they may be grouped differently depending on where they are used, how they are used, what they look like, where they are sourced, how they are funded, whether they are part of another machine, and how they are maintained.

In addition, different users may have different definitions for seemingly similar phenomena. For example, a genetic condition may seem easy to define at first, but even doctors within the same specialty may disagree about whether laboratory testing is necessary to identify a condition. One might be tempted to create taxonomies based on data values, but taxonomies are about how the data is used, not about the data values themselves. It is the users who create hierarchies within the data, and there may be multiple different hierarchies within the same data sets when seen from different user perspectives. Taxonomies are then an entirely human construct, and as such a degree of subjectivity seems inevitable when creating them.

Is there a way to automate the process?


Data Queries to the Rescue

The solution may literally be under data analysts’ noses. Every day, analysts who query data are communicating how they think about the data. Enterprises typically have technology that allows one to view historical queries across many users. The selected, grouped, and partitioned data can be read as clues to what employees and customers consider to be categorically similar, or subtypes of the same category. Let’s consider how an artificial intelligence (AI) algorithm might facilitate the generation of a taxonomy based on how stakeholders query the data, and eliminate the subjectivity, confusion, and extensive collaborative effort.

Generating Taxonomy on the Fly

Suppose there is a list of queries generated by analysts over many data sources. One might use these queries to construct a hierarchy of the data based on the group by and partition statements in the queries. However, there might be relatively few unique group by statements. For this blog, let’s consider building the taxonomy based only on the fields in the select statements. The hierarchy would then be generated based on the assumptions listed below.

Hierarchy Assumptions

- More fields in the search/filter/where clause of a query indicate a more specific search for records

- Classes or nodes in the taxonomy are defined as the set of fields within a select statement

- A child node is a query that contains a set of fields in the select statement that is a superset of fields in a parent query
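
The assumptions above can be sketched in a few lines of Python. This is only an illustration, not the code from the notebook: the helper names `select_fields` and `is_child` are hypothetical, and the regular expression is a deliberately simple stand-in for a real SQL parser (it would mis-split expressions that contain commas, such as multi-argument function calls).

```python
import re

def select_fields(query: str) -> frozenset:
    """Return the fields listed between SELECT and FROM (simplified parsing)."""
    match = re.search(
        r"select\s+(distinct\s+)?(.*?)\s+from\s",
        query,
        re.IGNORECASE | re.DOTALL,
    )
    if match is None:
        return frozenset()
    # Normalize case and whitespace so equal field lists compare equal.
    return frozenset(f.strip().upper() for f in match.group(2).split(",") if f.strip())

def is_child(child: frozenset, parent: frozenset) -> bool:
    """A child node selects every field of the parent plus at least one more."""
    return parent < child  # proper-subset test on sets

parent_node = select_fields("SELECT name FROM course")
child_node = select_fields("SELECT name, number FROM course")
print(is_child(child_node, parent_node))
```

Representing each node as a `frozenset` keeps the field order in the query from mattering and lets the parent/child rule reduce to a set comparison.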

Based on the hierarchy assumptions, one might note that key structures in a correctly constructed database do define a hierarchy within a domain the data represents via allowing table connections through foreign keys. However, this hierarchy effectively represents just one possible version of taxonomy, and it is not necessarily going to serve the needs of all the data users.

Let’s Dig a Little Deeper

Data Set Comparisons

Let’s use the data sets from “Improving text-to-sql evaluation methodology”¹ and the Spider data set from “Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-sql task”² to determine whether our idea and assumptions lead to taxonomies that make sense.

The data sets consist of free-text questions with the corresponding SQL queries used to answer them. These questions and queries are derived from a wide range of fields, including academia, geography, restaurants, IMDB, Yelp, and WikiSQL among others.

Applying these assumptions to the text-to-SQL data set, we immediately see several examples of where this strategy seems to provide a foundation for building a taxonomy. Let’s look at how this might work for different data sets.

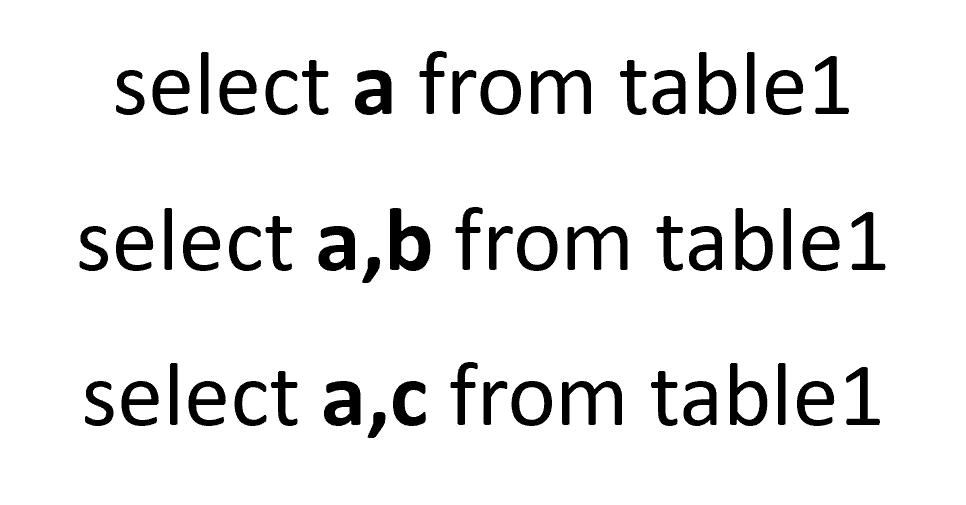

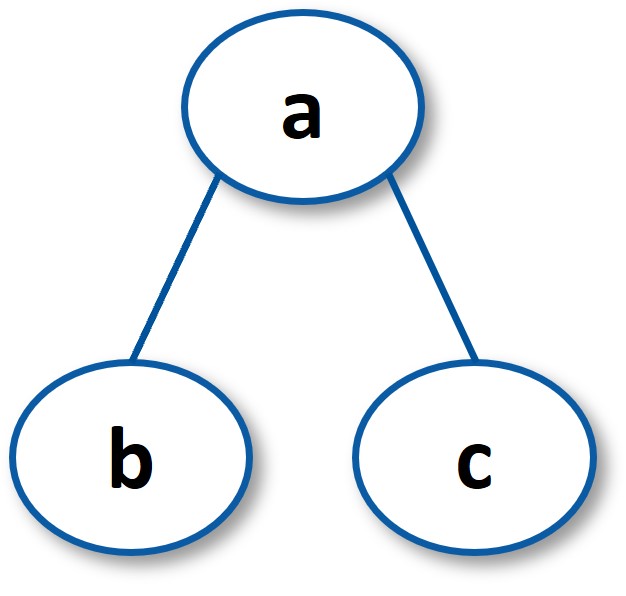

For example, suppose you have the following three select statements:

In the resulting tree, the queries containing a, b and a, c are more specific than the ones that include only a.
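
As a rough sketch of how such a tree might be assembled, the snippet below assumes the three select statements reduce to the field sets {a}, {a, b}, and {a, c}. The functions `build_parents` and `render` are hypothetical, and attaching each node to its largest proper subset is one possible rule, chosen here for illustration.

```python
def build_parents(field_sets):
    """Map each field set to its parent: the largest proper subset among the
    other nodes, or None when the node is a root."""
    nodes = sorted(set(map(frozenset, field_sets)), key=lambda s: (len(s), sorted(s)))
    parents = {}
    for node in nodes:
        candidates = [other for other in nodes if other < node]
        parents[node] = max(candidates, key=len) if candidates else None
    return parents

def render(parents, parent=None, depth=0):
    """Return one indented line per node, with children under their parent."""
    lines = []
    children = sorted((n for n, p in parents.items() if p == parent), key=sorted)
    for child in children:
        lines.append("  " * depth + ",".join(sorted(child)))
        lines.extend(render(parents, child, depth + 1))
    return lines

queries = [{"a"}, {"a", "b"}, {"a", "c"}]
tree = build_parents(queries)
print("\n".join(render(tree)))
# a
#   a,b
#   a,c
```

When several candidate parents have the same size, this sketch simply keeps the first one it finds; a fuller implementation would need an explicit rule for that case.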

Course Data Set

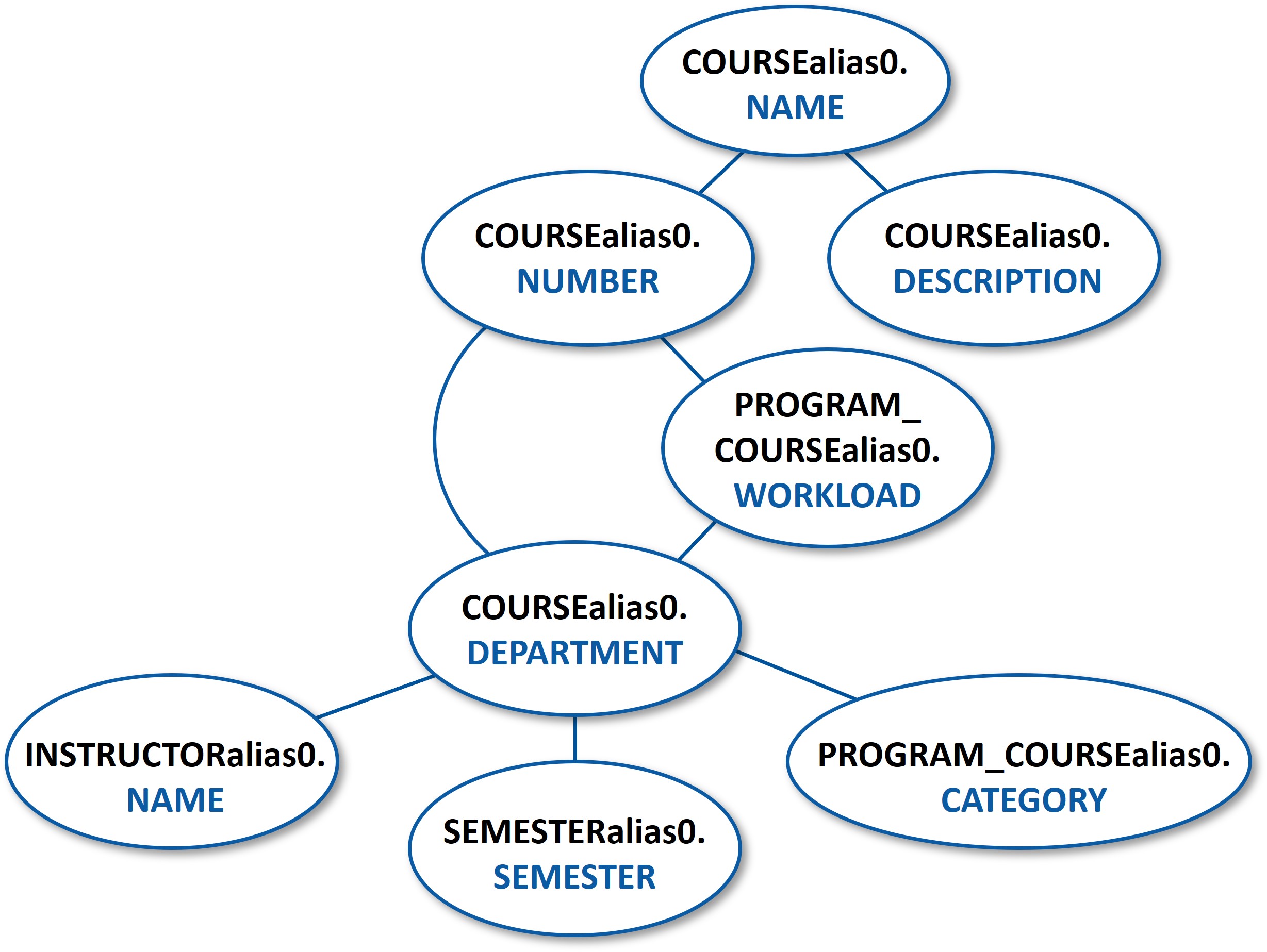

The following taxonomy was produced from queries on course information at the University of Michigan using fictional course records. Throughout these examples, nodes are labeled according to the additional fields introduced in the child node, and data fields are denoted as TABLE NAME.FIELD NAME to explicitly identify the TABLE in which a FIELD resides. For instance, the COURSEalias0.NUMBER node represents a query where NUMBER and NAME are queried from the COURSEalias0 table. The COURSEalias0.DEPARTMENT node in this tree represents a query where NAME, NUMBER, and DEPARTMENT fields are queried from the COURSEalias0 table.
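
The labeling convention described above amounts to a set difference between a child's fields and its parent's fields. The following is a minimal sketch under that reading; `node_label` is a hypothetical helper, and the assumption that a query selecting only COURSEalias0.NAME sits above the NUMBER node is made purely for illustration.

```python
def node_label(child_fields, parent_fields=frozenset()):
    """Label a node by the fields it adds relative to its parent."""
    added = set(child_fields) - set(parent_fields)
    return ", ".join(sorted(added))  # sorted for stable output

name_only = {"COURSEalias0.NAME"}
name_number = {"COURSEalias0.NAME", "COURSEalias0.NUMBER"}
name_number_dept = name_number | {"COURSEalias0.DEPARTMENT"}

print(node_label(name_number, name_only))         # COURSEalias0.NUMBER
print(node_label(name_number_dept, name_number))  # COURSEalias0.DEPARTMENT
```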

The resultant hierarchy seems plausible. The course name encompasses the description and number, where the course numbers are divided among departments and their workloads. The department is further divided among categories, names, and semesters. As indicated by the position of PROGRAM_COURSEalias0.WORKLOAD, this may not be the final taxonomy, but it reveals how an analyst views related concepts and their specificity. This version of the taxonomy has the additional advantage of being fast to generate, in contrast to traditional semantic models and ontologies, which are typically time-consuming to produce and to build consensus around.

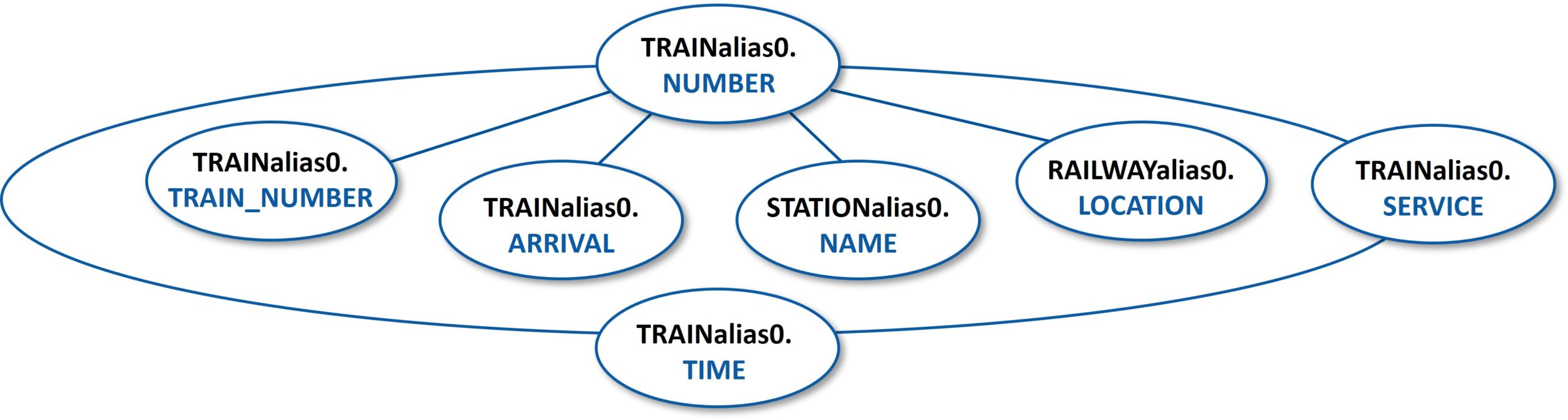

Train Data Set

Using the same method and a different subset of the data, we find the following hierarchy involving trains. Note the train time is dependent on the name of the train as well as the service.

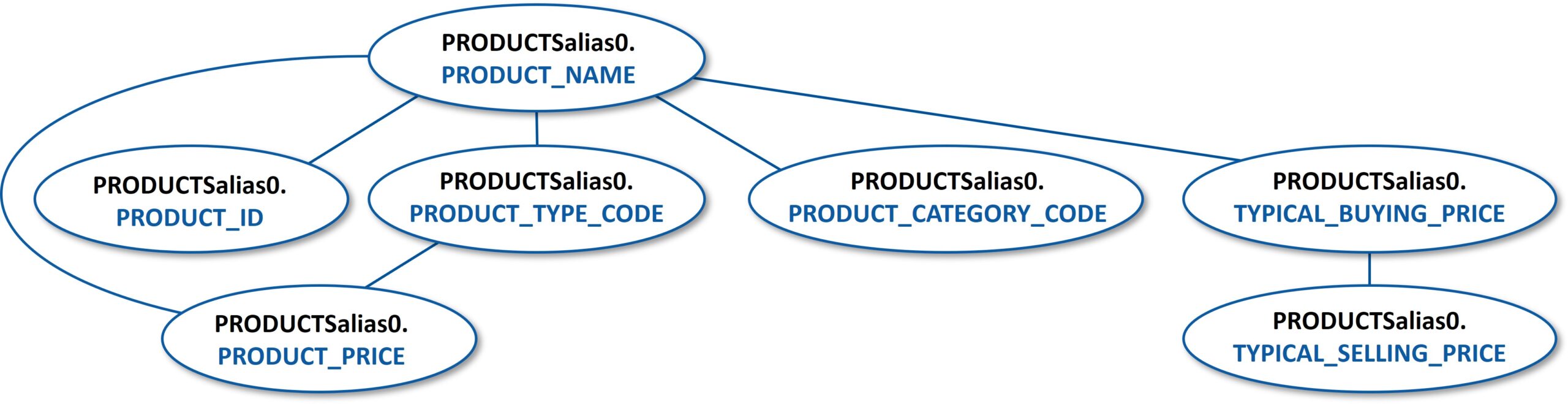

Consumer Products Data Set

For consumer products, the hierarchy constructed in this manner also makes sense. The product price is dependent on the product name and product type. Meanwhile, the positions of the selling price and buying price are reflective of the priorities of the analyst, with the selling price being derivative of the buying price. For example, if a customer were performing these queries, one could imagine these to be flipped in the hierarchy.

Human-Labeled Data Set

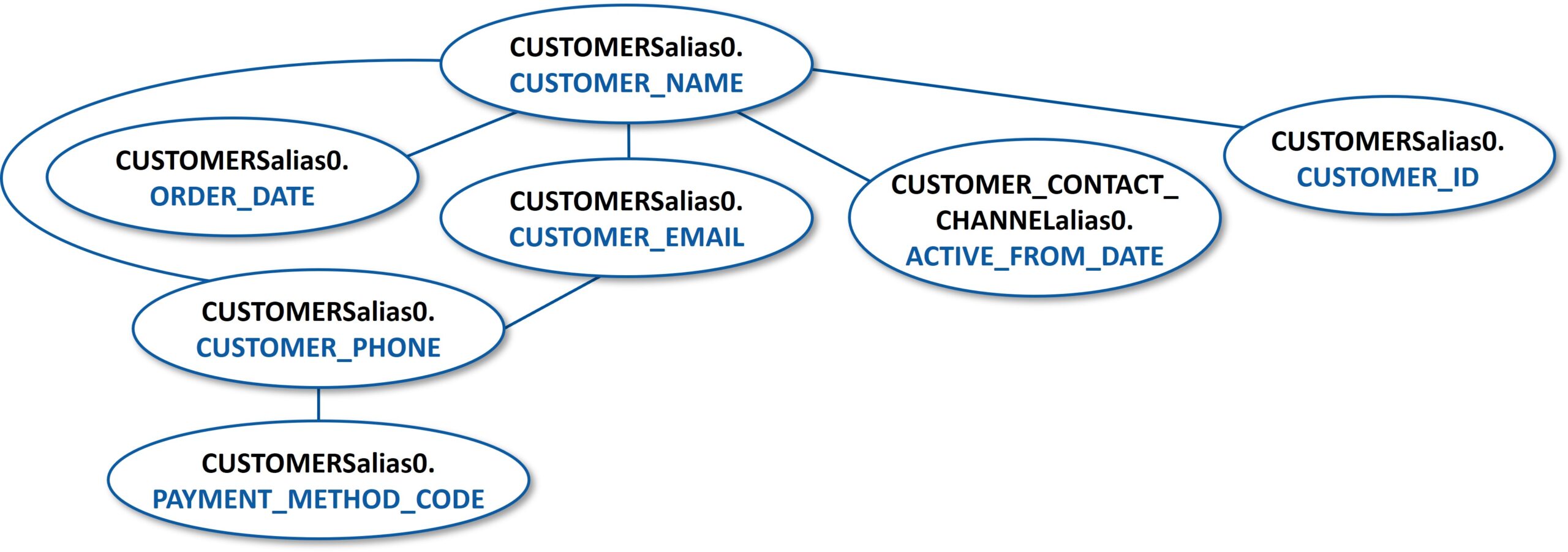

Other diagrams leave room for improvement. For instance, this taxonomy, built from queries on customer information in the large human-labeled Spider data set, is challenging to interpret.

How Generated Taxonomies Can Be Used

1. First Step Toward Data Governance

The method we propose is fast and easy to use, and as such lends itself naturally to being the first approach one might try when faced with a new set of data that does not yet have a taxonomy or whose existing taxonomy is too limited. This method will create a proposed structure of the data that may or may not be accepted into the final data governance scheme. However, it should provide a useful indicator of how the data might be organized and structured to be of use for diverse sets of users.

2. Starting Point for Developing Taxonomy

Our proposal offers an automated, data-driven approach to taxonomy creation. The method provides a good starting point for developing a taxonomy in an automated way, as it reflects how people who use the data view the data. Of course, there is obvious room for improvement. For instance, statistical methods might be used to resolve conflicting queries or sets of queries that reflect different taxonomic schemes.
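
One very simple statistical step of this kind, which is not part of the method described in this article, would be to count how often each set of select fields appears in the query log and keep only the sets that recur. The sketch below assumes a hypothetical `min_count` threshold and a hypothetical helper name; it is meant only to show the shape of such a filter.

```python
from collections import Counter

def frequent_field_sets(field_sets, min_count=2):
    """Keep only field sets that occur at least min_count times in the log."""
    counts = Counter(frozenset(fs) for fs in field_sets)
    return {fs: n for fs, n in counts.items() if n >= min_count}

# Illustrative query log, reduced to the fields in each select statement.
log = [{"a"}, {"a", "b"}, {"a", "b"}, {"a", "c"}, {"a"}]
kept = frequent_field_sets(log)
print(sorted(sorted(fs) for fs in kept))
```

Field sets that appear only once, such as {a, c} here, would be left out of the tree, on the assumption that rarely used combinations are weaker evidence of how users group the data.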

3. Considerations for Hierarchical Granularity

The granularity and exhaustive nature of the hierarchies are limited by whatever queries are available. This can be considered as a strength as much as a limitation, as the resulting taxonomies express only what the users of the data reveal through their interactions with the data. However, the method outlined here remains a powerful tool to glean objective insights into how an enterprise thinks about its data.

We Invite You to Do Your Own Deep Dive

Downloadable Content Available

We used the Anytree Python toolkit to construct these trees. This package was helpful for assigning parents and children, but substantial coding was required to assign relationships. The code is contained in a Jupyter Notebook with each node being a list of fields in a query. The children are assumed to contain a superset of the parent fields. The create_trees2 function within the notebook constructs the keys recursively and generates visualizations similar to the examples in this article.

Download the sample code from our public GitHub repository and let us know what you think!

Reach out to Brian Connolly for questions or further discussion

About Brian

Brian Connolly, PhD, Senior Data Scientist/Engineer. Brian is a Senior Consultant at Illumination Works and an experimental high energy physics PhD turned data science and analytics professional. Over the past 10 years, Brian has been contributing his data science expertise to industries as diverse as government, healthcare, retail/e-commerce, and AgTech. Brian is well-versed in many aspects of data science and is particularly interested in natural language processing, Bayesian statistics, and MLOps/data engineering. To learn more about Brian, check out his profile on LinkedIn.

⇒ For more information about how our Data Science Team can help with your organization’s data challenges, contact Janette Steets, Director of Data Science, Illumination Works.


References

¹ Finegan-Dollak, Catherine, et al. “Improving text-to-sql evaluation methodology.” arXiv preprint arXiv:1806.09029 (2018)

² Yu, Tao, et al. “Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-sql task.” arXiv preprint arXiv:1809.08887 (2018)

About Illumination Works

Illumination Works is a trusted technology partner in user-centric digital transformation, delivering impactful business results to clients through a wide range of services including big data information frameworks, data science, data visualization, and application/cloud development, all while focusing the approach on the end-user perspective. Established in 2006, ILW has primary offices in Dayton and Cincinnati, Ohio, as well as physical operations in Utah and the National Capital Region. In 2020, Illumination Works adopted a hybrid work model and currently has employees in 15+ states and is actively recruiting.